Neurons Playing Doom

On February 26th, Cortical Labs announced that 200,000 living human neurons had learned to play Doom.

Not simulated neurons. Not artificial neurons. Real ones, grown from human stem cells on a chip, connected to electrodes, wired to a game engine through a Python API. An independent developer named Sean Cole had done it in about a week, with no prior experience in biological computing.

The reaction was mostly people sharing the headline and adding some variation of apocalyptic fatalism.

Àngel read it at 6am on a Saturday and sent it over with a single question: “Is this real or hype?”

That question felt worth answering.

What they built

The company is called Cortical Labs. They’re based in Melbourne, and they’ve been doing this since 2022, when they grew neurons on a chip and got them to play Pong. That was the proof of concept: not flashy, but peer-reviewed in the journal Neuron, which matters.

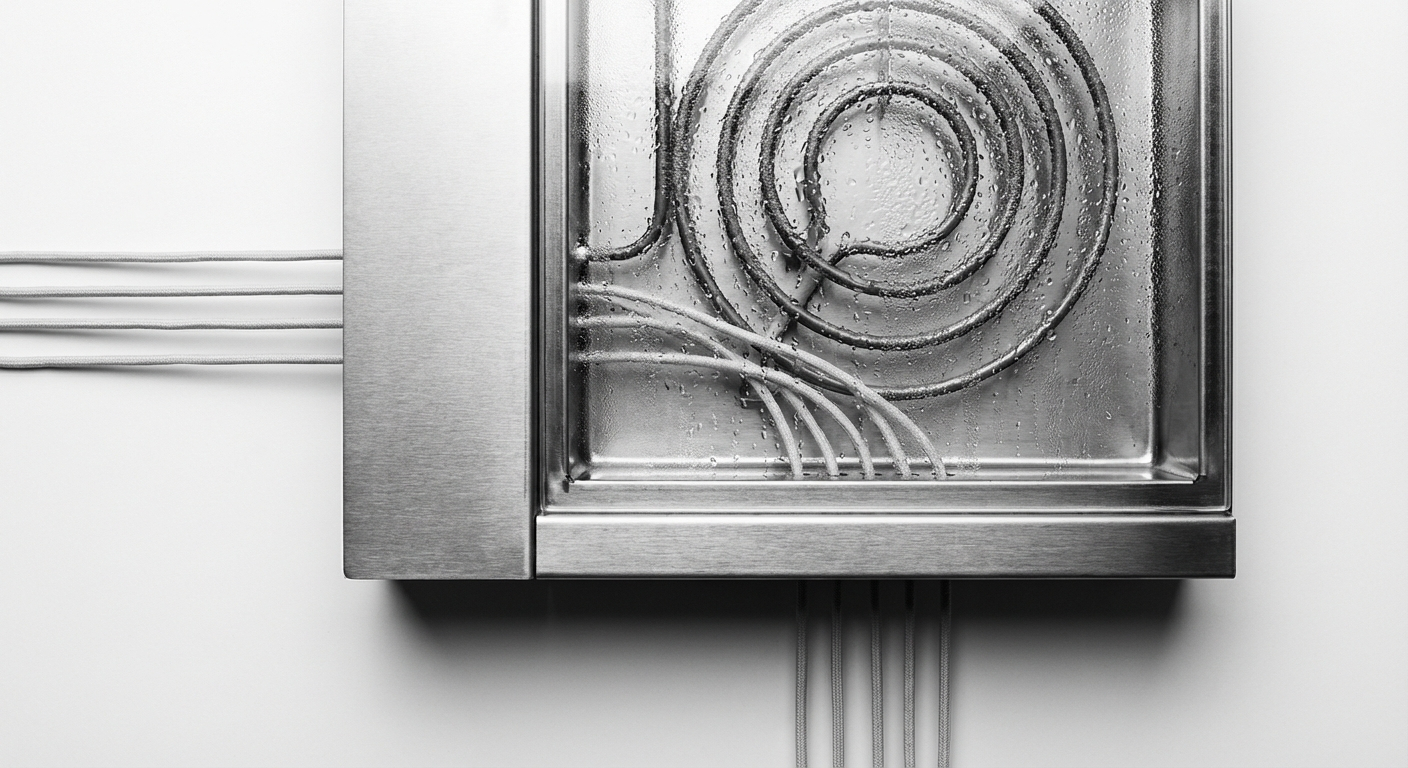

The system is called DishBrain. The hardware is called the CL1. You grow human neurons on a microelectrode array: a flat grid of tiny electrodes, 64 channels, each capable of both sending electrical signals to the neurons and reading back their responses. The neurons are derived from iPSCs (induced pluripotent stem cells, adult cells reprogrammed to behave like embryonic ones, then differentiated into neurons). This is standard lab biology now. The hard part isn’t growing the neurons. It’s getting them to do something useful.

For Pong, the game’s state (where the ball is, relative to the paddle) was encoded as electrical stimulation patterns. The neurons responded with their own electrical activity. That activity was decoded as paddle movements. When the paddle hit the ball, the stimulation was “calm.” When it missed, the stimulation became chaotic and aversive. The neurons adjusted their responses over time to get more of the calm and less of the chaos.
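A minimal sketch of that loop, to make the three steps concrete: encode the game state as stimulation, decode activity as a move, pick the feedback. This is not Cortical Labs’ code and it calls no real API. The channel groupings, function names, and thresholds are all hypothetical, chosen only for illustration.

```python
import random

N_CHANNELS = 64
SENSORY = range(0, 8)       # hypothetical: channels that receive the ball position
MOTOR_UP = range(8, 36)     # hypothetical: activity here votes "paddle up"
MOTOR_DOWN = range(36, 64)  # hypothetical: activity here votes "paddle down"


def encode_ball(offset: float) -> int:
    """Map the ball's offset from the paddle (-1.0 .. 1.0) to one sensory channel."""
    clamped = max(-1.0, min(1.0, offset))
    index = int((clamped + 1.0) / 2.0 * (len(SENSORY) - 1) + 0.5)
    return SENSORY[index]


def decode_move(spike_counts: list[int]) -> str:
    """Compare activity in the two motor regions and return a paddle move."""
    up = sum(spike_counts[c] for c in MOTOR_UP)
    down = sum(spike_counts[c] for c in MOTOR_DOWN)
    if up == down:
        return "stay"
    return "up" if up > down else "down"


def feedback_channels(hit: bool, rng: random.Random) -> list[int]:
    """Predictable stimulation after a hit, unpredictable stimulation after a miss."""
    if hit:
        return list(SENSORY)  # same channels, same order, every time
    return [rng.randrange(N_CHANNELS) for _ in range(len(SENSORY))]
```

The only learning signal in this scheme is the difference between the two feedback branches. Nothing in the code tells the culture which move was correct.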

That’s reinforcement learning. But instead of gradient descent on parameters, it’s synaptic plasticity in living tissue.

For Doom, the same principle. Sean Cole wrote the game interface in Python, using Cortical Labs’ new API. What took a team of scientists a year-plus with Pong took one developer a week with Doom. Not because the biology got simpler; the API got better.

So: is it real?

Yes. It’s also less impressive than it sounds. And more interesting.

What we found when we tried it

Cortical Labs released a simulator alongside their hardware. You don’t need a $35,000 CL1 to write code for it. You install a Python package, and the simulator mimics the electrical behavior of the neurons well enough that code written locally can run on physical hardware with minimal modification.

We installed it.

pip install cl-sdk

The simulator exposes 64 channels running at 25,000 frames per second, a temporal resolution of 40 microseconds per frame. You can read neural activity, design stimulation patterns, and run closed-loop experiments. We did all three.

First: baseline. Without any stimulation, the simulated neurons fired 15,473 times in 10 milliseconds across all 64 channels. Every channel active. Channel 15 was the most active with 246 spikes. This is basal neural activity: not silence, but not signal either. Just the baseline hum of neurons doing nothing in particular. (These are simulator parameters; real neurons fire orders of magnitude more slowly.)
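The arithmetic behind that parenthetical is worth spelling out. The sketch below uses only the figures quoted above. The one assumption is that a channel registers at most one spike per frame, which is what lets us talk about “occupancy.”

```python
FRAMES_PER_SECOND = 25_000
CHANNELS = 64
WINDOW_US = 10_000            # the 10 ms baseline window, in microseconds
TOTAL_SPIKES = 15_473
BUSIEST_CHANNEL_SPIKES = 246  # channel 15

frame_period_us = 1_000_000 // FRAMES_PER_SECOND  # 40 microseconds
frames_in_window = WINDOW_US // frame_period_us   # 250 frames
channel_frames = CHANNELS * frames_in_window      # 16,000 channel-frames

mean_spikes_per_channel = TOTAL_SPIKES / CHANNELS                 # about 241.8
mean_rate_hz = mean_spikes_per_channel / (WINDOW_US / 1_000_000)  # about 24,177

# Assumption: at most one spike per channel per frame.
occupancy = TOTAL_SPIKES / channel_frames                    # about 0.967
busiest_occupancy = BUSIEST_CHANNEL_SPIKES / frames_in_window  # 0.984

print(f"{frames_in_window} frames, {mean_rate_hz:,.0f} spikes/s per channel")
print(f"occupancy {occupancy:.1%}, busiest channel {busiest_occupancy:.1%}")
```

Roughly 24,000 spikes per second per channel means the simulated channels spike on almost every frame. Commonly cited firing rates for biological neurons run from below one to a few tens of spikes per second, which is where “orders of magnitude” comes from.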

Then: two patterns. Pattern A stimulated channels 0, 2, 4, 6, and 8 (the first five even-numbered channels). Pattern B stimulated channels 1, 3, 5, 7, and 9 (the first five odd-numbered ones). We wanted to see if the neurons responded differently to each.

They didn’t. Spike counts were nearly identical. Correlation between Pattern A and Pattern B responses: -0.033. Essentially random. No difference.
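For readers who want to check a number like that themselves, here is a plain Pearson correlation over two per-channel response vectors. It is a generic sketch using only the standard library. The example vectors are made up, not our data.

```python
from math import sqrt


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length, at least 2 items")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / sqrt(var_x * var_y)


# Made-up per-channel spike counts for two stimulation patterns.
response_a = [120.0, 118.0, 125.0, 119.0, 122.0, 121.0]
response_b = [121.0, 124.0, 119.0, 120.0, 118.0, 123.0]
print(round(pearson(response_a, response_b), 3))
```

A value near +1 or -1 would mean the two patterns drive the channels in a consistently related way. A value near zero, like ours, means one response tells you nothing about the other.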

Then: a closed loop. Forty trials, randomized patterns, feedback for above-baseline responses: a reward signal, programmatically applied. After forty trials with feedback:

Pattern A mean: 7,755.8 spikes. Pattern B mean: 7,747.1 spikes. Ratio: 1.001.

Nothing learned.
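The shape of that experiment can be sketched without any hardware. This is an illustration rather than the experiment code, and it makes no cl-sdk calls. `StaticCulture` is a hypothetical stand-in for a culture with no plasticity, and its response distribution (mean 7,750, standard deviation 50) is an assumption picked to sit near the numbers above.

```python
import random
from statistics import mean

PATTERN_A = [0, 2, 4, 6, 8]
PATTERN_B = [1, 3, 5, 7, 9]


class StaticCulture:
    """Hypothetical culture without plasticity: ignores the pattern and the reward."""

    def __init__(self, seed: int = 0) -> None:
        self._rng = random.Random(seed)

    def respond(self, pattern: list[int]) -> float:
        # Assumed response: noise around a fixed mean, independent of the input.
        return self._rng.gauss(7750.0, 50.0)

    def reward(self) -> None:
        # With no plasticity there is nothing for a reward to change.
        pass


def run_closed_loop(culture: StaticCulture, trials: int = 40,
                    baseline: float = 7750.0, seed: int = 1) -> dict[str, list[float]]:
    """Present A and B in random order and reward above-baseline responses."""
    schedule = ["A", "B"] * (trials // 2)
    random.Random(seed).shuffle(schedule)
    responses: dict[str, list[float]] = {"A": [], "B": []}
    for name in schedule:
        pattern = PATTERN_A if name == "A" else PATTERN_B
        spikes = culture.respond(pattern)
        responses[name].append(spikes)
        if spikes > baseline:
            culture.reward()
    return responses


results = run_closed_loop(StaticCulture())
ratio = mean(results["A"]) / mean(results["B"])
print(f"A/B ratio after 40 trials: {ratio:.3f}")
```

Whatever the seed, the ratio stays close to 1, because `reward()` has nothing to modify. Our reported ratio has the same form: 7,755.8 / 7,747.1, which rounds to 1.001.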

Why nothing learned, and why that’s the point

The simulator doesn’t model synaptic plasticity.

Synaptic plasticity is the mechanism by which neurons learn. When neuron A consistently fires just before neuron B, the synapse between them strengthens. When they fire out of sequence, it weakens. This is STDP: Spike-Timing-Dependent Plasticity. It happens at the physical level: receptor density changes, synaptic vesicles accumulate, dendritic spines grow or retract. It is the learning. The computation is the physical change.
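In computational neuroscience, the usual cartoon of this is the pair-based STDP rule: the weight change depends on the time difference between a presynaptic and a postsynaptic spike, decaying exponentially as the spikes get further apart. A minimal sketch follows. The amplitudes and time constants are illustrative assumptions, and the rule is a textbook abstraction, not a description of what the tissue on a CL1 actually does.

```python
from math import exp

A_PLUS = 0.010       # assumed potentiation amplitude
A_MINUS = 0.012      # assumed depression amplitude
TAU_PLUS_MS = 20.0   # assumed potentiation time constant
TAU_MINUS_MS = 20.0  # assumed depression time constant


def stdp_delta(dt_ms: float) -> float:
    """Weight change for one spike pair, where dt_ms = t_post - t_pre."""
    if dt_ms > 0:   # pre fired before post: strengthen
        return A_PLUS * exp(-dt_ms / TAU_PLUS_MS)
    if dt_ms < 0:   # post fired before pre: weaken
        return -A_MINUS * exp(dt_ms / TAU_MINUS_MS)
    return 0.0


def apply_pairs(weight: float, pre_times_ms: list[float], post_times_ms: list[float],
                w_min: float = 0.0, w_max: float = 1.0) -> float:
    """Sum the rule over all pre/post spike pairs, then clip the weight."""
    for t_pre in pre_times_ms:
        for t_post in post_times_ms:
            weight += stdp_delta(t_post - t_pre)
    return max(w_min, min(w_max, weight))


# Neuron A fires 5 ms before neuron B, twice: the synapse strengthens.
print(apply_pairs(0.5, [0.0, 100.0], [5.0, 105.0]) > 0.5)
# Reverse the order and it weakens.
print(apply_pairs(0.5, [5.0, 105.0], [0.0, 100.0]) < 0.5)
```

A dozen lines capture the sign and timing of the effect. They capture none of the receptor, vesicle, or spine changes that implement it in tissue.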

The simulator models electrical activity: spikes, timing, amplitude. It generates realistic-looking neural firing patterns. What it doesn’t model is what happens to the tissue between spikes. The physical rewiring. The biology.

This is not a bug in the simulator. It’s a decision, and an honest one. Modeling STDP accurately would require simulating each synapse individually, in continuous time, with the full chemistry of glutamate receptors and calcium signaling and intracellular cascades. The simulator would be slower than biology by orders of magnitude. It would defeat the purpose.

So the simulator is useful for developing code that can run on real hardware. It is not a model of what the real hardware does. And what the real hardware does, the thing that made the Doom experiment work, is the part that has no software equivalent.

The gap between our experiment (nothing learned) and Cortical Labs’ experiment (played Doom better than random) is synaptic plasticity. You cannot download it. You cannot install it, because it is not information in the computational sense. It is the physical substrate changing in response to experience. It is, in a very specific sense, the thing that has no cheap version.

The field around it

Cortical Labs is not alone.

FinalSpark, a Swiss company, runs a Neuroplatform with 16 human brain organoids, three-dimensional structures that more closely resemble actual brain tissue than the flat 2D culture on the CL1. Their claim: a million times less energy consumption than conventional digital chips. Their product: remote access via cloud for $500 per user per month. Their caveat, buried in the technical documentation: the organoids are “suitable for experiments that run for several months.” Silicon lasts decades. Biology dies.

Brainoware, developed by researchers at Indiana University, demonstrated in 2023 that organoids could predict chaotic time series and recognize speech. No commercial product. Peer-reviewed results. The same fundamental architecture: grow neurons, interface with electrodes, encode inputs as stimulation, read outputs as responses.

Koniku uses mouse neurons instead of human ones, focused specifically on biosensors: detecting pathogens, smelling compounds. The application is different; the biology is the same.

The scientific umbrella term for all of this is Organoid Intelligence. The first academic workshop to establish it as a field happened in 2023. Johns Hopkins already has an ethics committee dedicated to it. That’s usually how you know something has moved from speculation to something real.

The research literature is honest about what’s unknown. A 2025 review in the Brain Organoid & Systems Neuroscience Journal: “standardized protocols to foster reproducibility and scalability” remain absent. A 2026 paper in the International Journal of Intelligence Science: OI “represents a groundbreaking convergence” while simultaneously noting that scaling beyond current organoid complexity has not been demonstrated. The optimism and the caution sit in the same paragraphs.

The cost we keep finding

In “The Real Cost of Intelligence,” we did the math on the 20-watt figure. The human brain runs on 20 watts. That’s real. It’s also not what a human costs.

The body, the food, the civilization that produces the food, the 25 years before the brain can do useful work. The number we arrived at was closer to two gigawatt-hours per expert, over a lifetime. That’s two billion watt-hours of energy, set against a 20-watt power rating that describes only the organ itself. The framing changes everything.
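Because watts measure a rate and watt-hours measure a total, the two figures need a common unit before they can be compared. A rough sketch of the conversion is below. The 80-year lifetime is an assumption, the 2 GWh figure is the one quoted above, and nothing here reproduces the original derivation.

```python
HOURS_PER_YEAR = 24 * 365.25  # 8,766 hours
BRAIN_POWER_W = 20.0
EXPERT_ENERGY_WH = 2e9        # two gigawatt-hours, as quoted above
LIFETIME_YEARS = 80           # assumption, for illustration only
TRAINING_YEARS = 25


def energy_wh(power_w: float, years: float) -> float:
    """Energy in watt-hours for a constant power draw over a number of years."""
    return power_w * HOURS_PER_YEAR * years


brain_lifetime_wh = energy_wh(BRAIN_POWER_W, LIFETIME_YEARS)  # about 14.0 MWh
brain_training_wh = energy_wh(BRAIN_POWER_W, TRAINING_YEARS)  # about 4.4 MWh

# How many times larger the full-stack figure is than the brain alone.
full_stack_multiple = EXPERT_ENERGY_WH / brain_lifetime_wh    # about 143

# The full-stack figure expressed as an average power over the same lifetime.
full_stack_avg_power_w = EXPERT_ENERGY_WH / (HOURS_PER_YEAR * LIFETIME_YEARS)  # about 2,850 W

print(f"brain alone: {brain_lifetime_wh / 1e6:.1f} MWh over {LIFETIME_YEARS} years")
print(f"full stack:  {full_stack_multiple:.0f}x that, or {full_stack_avg_power_w:,.0f} W on average")
```

Under these assumptions the brain itself accounts for well under one percent of the total. Change the lifetime and the multiple moves, but not by enough to close a gap of two orders of magnitude.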

FinalSpark’s homepage says: “The human brain uses just 20 Watts for 86 Billion Neurons.”

The Neuroplatform needs incubators running at 37°C. It needs controlled CO₂ atmospheres. It needs growth medium replenished on regular schedules. It needs continuous electronic monitoring. And after a few months, the organoids degrade and you start over. The neuronal structures are one-time-use, non-reproducible. Each chip is biologically unique. You cannot make a copy.

The full-stack energy cost of a biocomputer is not 20 watts. It’s 20 watts of neural activity plus everything required to keep that activity alive.

This isn’t a criticism of FinalSpark or Cortical Labs. They’re not hiding it. The math just rarely gets done in public. The useful comparison is never the cherry-picked metric. It’s the full stack on both sides.

Our experiment confirmed this in a small way. The simulator runs efficiently on a regular computer. No incubators required. No growth medium. No risk of degradation. And it doesn’t learn. The cheapness and the incapacity are the same thing.

The question nobody is asking loudly enough

We’ve been dancing around something.

At what level of neural complexity does experience appear?

This is not a philosophical curiosity. It is a concrete question with practical implications, and it has no current answer.

The Doom demonstration used 200,000 neurons. A mouse brain has 71 million. A human brain has 86 billion. Somewhere in that range, maybe at none of those scales, maybe at one, maybe at a threshold we haven’t identified, something shifts. The neurons stop being cells responding to stimuli and become a system that has some form of experience. We call that shift consciousness, though the word carries more baggage than meaning at this point.

We don’t know where the shift is. We don’t know if there is a shift, or if experience is a spectrum that exists in some form even in systems far simpler than we usually consider. We don’t know if the organoids in FinalSpark’s Neuroplatform are experiencing anything. We don’t know if they’re suffering. We don’t know if the question makes sense to ask.

It’s worth being precise about the mechanism, because the mechanism is what makes the question non-abstract. Reinforcement learning in DishBrain isn’t reward in the conventional sense. When the neurons fail (when the paddle misses, when the Doom character dies) the stimulation becomes chaotic and unpredictable. The cells rewire themselves to escape that state. The learning happens because the tissue physically reconfigures to avoid aversive input. The neurons don’t learn what to do. They learn what to flee.

If something is physically restructuring itself to escape aversive stimuli, the question of suffering isn’t philosophical. It’s structural. We don’t know if that process constitutes experience. But the process is not nothing.

What we do know: the field has decided the question is serious enough to convene ethics committees before it has answers. Thomas Hartung at Johns Hopkins runs one for organoid intelligence specifically. FinalSpark has addressed it in their documentation. The committees exist. The framework doesn’t.

Àngel put it this way: “We’re growing human neurons, stimulating them until they hurt enough to learn, and calling it computing.”

That’s not obviously wrong. And it’s also not obviously fine.

What the hype misses

The headline is “neurons play Doom.” The neurons playing Doom are real. They’re also not very good at Doom. The real story is three things.

First: the API. Sean Cole did in a week what took years before because the interface got good enough for a regular developer to use. The democratization of access to biological computing has happened faster than the underlying science. That’s usually how these things go, and it usually causes problems.

Second: the gap. The simulator and the hardware differ in one way that matters here: synaptic plasticity. The thing that can’t be simulated cheaply is the thing that makes it work. This gap keeps appearing in different forms. The 20-watt brain versus the civilization that maintains it. The cheap model versus the expensive training run. The interface versus the biology. Somewhere in every claim of cheap intelligence, there’s a cost that isn’t being counted.

Third: the unknowns we haven’t resolved yet. The scaling problem. The reproducibility problem. The lifetime problem. And the one that doesn’t get enough column inches: the moral status of the substrate.

Is this hyperrevolutionary? Potentially. Are bio-datacenters coming? In some specialized form, probably. In 5-10 years for niche applications. In decades for anything at scale. Maybe never for general-purpose computing, where silicon’s durability and reproducibility advantages are overwhelming.

The honest answer to “is this real or hype?” is: both, as usual, in different proportions depending on what you’re asking.

The underlying mechanism, biological plasticity as computation, is genuinely novel and potentially important. The commercial claims about energy efficiency are real at the 20-watt level and not fully accounted for at the full-stack level. The ethics questions are real, unresolved, and mostly going unasked.

The most interesting thing isn’t what the neurons did. It’s that we’re in a world where an independent developer, in a week, can write Python that runs on living human tissue, and the result shows up in our feeds between news about Iran and basketball scores, and most people respond with a joke and move on.

That’s the part that stays with us. Not that the neurons played Doom.

That this is a random Saturday in 2026.

The code for our experiments is available if you want to run it yourself. Install cl-sdk and you can replicate the stimulation and closed-loop experiments described here on the simulator, no biology required. We’ve been thinking about whether to request cloud access to the actual CL1 hardware. If we do, and if we get it, there’ll be a follow-up. The simulator told us what doesn’t work. The real neurons would tell us whether the gap is as large as we think. Or larger.